The market for servers and supercomputers is still dominated by offerings from Intel and AMD. The x86 architecture is the classic choice for manufacturers, who, with few exceptions, build their data center and supercomputer solutions on Intel Xeon and AMD EPYC processors.

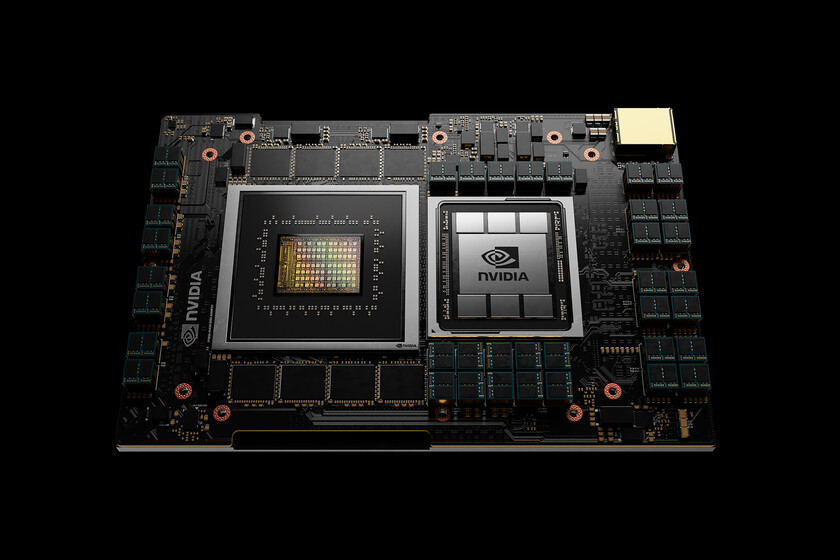

NVIDIA wants that to change, and has announced an ambitious CPU based on the ARM architecture called Grace (in honor of Grace Hopper). Although the chip is squarely focused on artificial intelligence workloads, the announcement is a clear sign that Intel and AMD should stay alert.

Grace flexes its muscles when the GPU is not enough

NVIDIA GPUs have long delivered excellent performance in many areas of artificial intelligence, but the company wanted to address a clear need in these scenarios: keeping the GPUs well fed with data while they run these workloads.

In fact, NVIDIA pointed explicitly to the problems that x86 CPUs and conventional memory pose in this area: system bandwidth cannot keep the GPUs working at full power, and that is where Grace could help.

The processor can sustain a 900 GB/s connection to NVIDIA GPUs thanks to NVLink 4 technology, which is 30 times the bandwidth offered with conventional processors. In addition, Grace uses a memory system built on LPDDR5x modules to improve both bandwidth and efficiency.
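To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the two numbers quoted above (900 GB/s and the 30x factor) and assumes the 30x claim compares like with like, which implies a conventional baseline of about 30 GB/s. The 80 GB payload and the function names are hypothetical, chosen purely for illustration, and real transfers would not reach peak bandwidth.

```python
# Back-of-the-envelope arithmetic based on the figures quoted in the article.
GRACE_LINK_GB_PER_S = 900.0  # CPU-to-GPU bandwidth quoted for Grace
CLAIMED_SPEEDUP = 30.0       # "30 times" factor quoted in the article


def implied_baseline_gb_per_s(link_gb_per_s: float, speedup: float) -> float:
    """Bandwidth a conventional system would need for the speedup claim to hold."""
    return link_gb_per_s / speedup


def transfer_seconds(payload_gb: float, bandwidth_gb_per_s: float) -> float:
    """Idealized time to move a payload at peak bandwidth (no overhead modeled)."""
    return payload_gb / bandwidth_gb_per_s


baseline = implied_baseline_gb_per_s(GRACE_LINK_GB_PER_S, CLAIMED_SPEEDUP)

# Hypothetical payload size, not taken from the article.
payload_gb = 80.0

print(f"Implied conventional baseline: {baseline:.0f} GB/s")
print(f"Time at baseline: {transfer_seconds(payload_gb, baseline):.2f} s")
print(f"Time over the Grace link: {transfer_seconds(payload_gb, GRACE_LINK_GB_PER_S):.3f} s")
```

Under these assumptions, the same payload that takes seconds to reach the GPU on a conventional system would arrive in under a tenth of a second, which is the sense in which the link keeps the GPU "fed".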

The processor will use a future generation of ARM Neoverse cores, but its true potential lies in that bandwidth, which will work hand in hand with NVIDIA GPUs in data centers and supercomputers geared toward artificial intelligence.

We will have to wait to see Grace in action: NVIDIA expects to have this CPU ready in 2023, but it already has customers who will use these chips and include them in their future supercomputers. Given the growing relevance of artificial intelligence, NVIDIA's gamble threatens the future dominance of Intel's Xeons and AMD's EPYCs.

More information | NVIDIA